Just a few days ago, it was reported that Intel is expected to launch its first discrete gaming GPUs, based on the Xe-HPG architecture, in 2021. The company plans to target the enthusiast gaming market, where it will compete directly against AMD’s upcoming RDNA 2 and NVIDIA’s Ampere GPU lineups.

Now, according to a recent Taiwanese report, Intel’s Xe-HPG GPUs will be fabbed at TSMC on its 6nm process node. The report was published by the Taiwan-based outlet IThome (via @harukaze5719). TSMC’s 6nm process node, codenamed ‘N6’, was mentioned before in the company’s latest roadmap. It uses a refined version of EUV lithography and offers 18% higher logic density than TSMC’s 7nm (N7) process node. According to the report, Intel will be placing 6nm orders with TSMC.

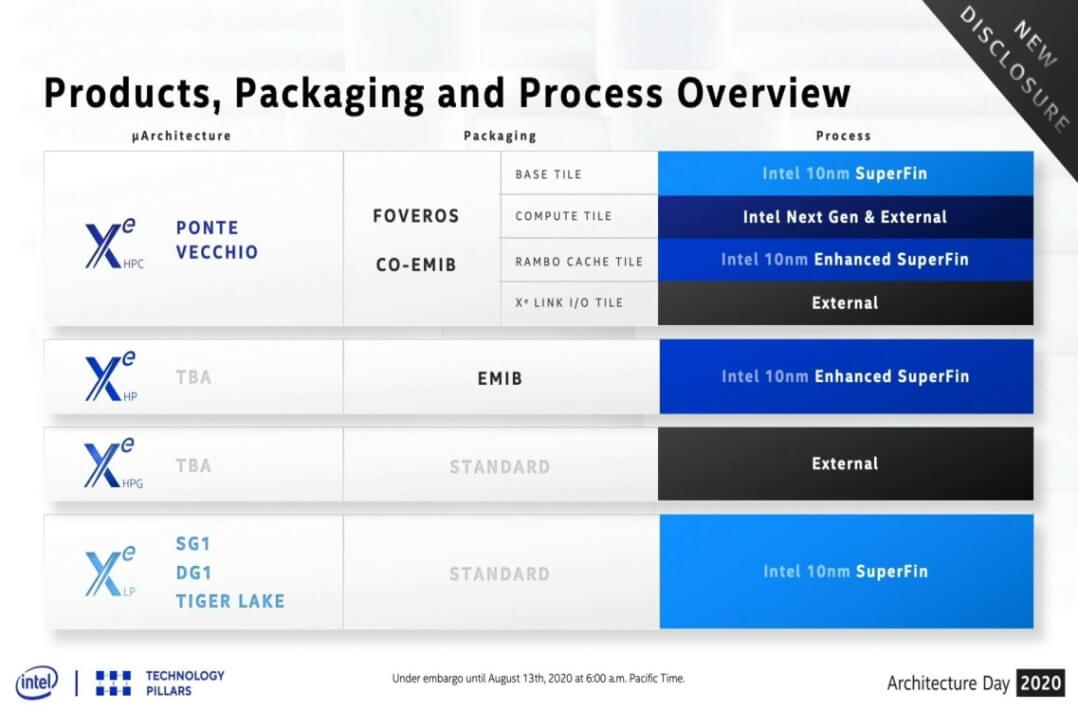

We expect the Xe-HPG gaming GPUs to use a standard, simple packaging design, while the datacenter and HPC chips are going to use advanced packaging technologies such as EMIB, Co-EMIB, and Foveros. The Xe-HPG architecture is optimized for gaming, and Intel has been developing this sub-architecture since 2018. Xe-HPG is another category within the Xe micro-architecture family: it falls between the Xe-LP and Xe-HP sub-architectures and targets the ‘Gaming’ market segment (mid-range to enthusiast).

The Xe-HPG architecture is built upon three Xe pillars: Xe-LP (Graphics Efficiency), Xe-HP (Scalability) and Xe-HPC (Compute Efficiency). These new chips should hopefully also support GDDR6 memory.

Intel has also confirmed that its Xe-HPG series of cards will support hardware-accelerated ray tracing. NVIDIA introduced hardware ray tracing (under its RTX brand) with the Turing GPU lineup two years ago, and AMD’s upcoming RDNA 2-based cards are also going to offer hardware-level ray tracing support. So it makes sense for Intel to adopt this technology, though we don’t yet have any performance metrics for the new Xe-HPG cards to compare them with NVIDIA’s current Turing lineup.

Hello, my name is Nick Richardson. I’ve been an avid PC and tech fan since the good old days of the RIVA TNT2 and 3dfx Interactive “Voodoo” gaming cards. I mostly love playing first-person shooters, and I’ve been a die-hard fan of the FPS genre since the good old Doom and Wolfenstein days.

Music has always been my passion and my roots, but I started gaming “casually” when I was young, on NVIDIA’s GeForce3 series of cards. I’m by no means a hardcore gamer, but I just love anything related to PCs, games, and technology in general. I’ve been involved with many indie metal bands worldwide, and have helped them promote their albums to record labels. I’m a very broad-minded, down-to-earth guy. Music is my inner expression and soul.
