It appears that TweakTown has managed to benchmark two RX 6800 XT GPUs in a multi-GPU setup in DX12 mode. As we all know by now, SLI and CrossFireX are more or less dead in the water. Developers have been slowly abandoning these multi-GPU technologies because of poor scaling in games, driver issues, and a low adoption rate, among other factors.

Only NVIDIA’s current RTX 3090 flagship GPU supports the new NVLink interface. The rest of the cards in the Ampere lineup lack the connector fingers, so none of those cards can be run in SLI.

Each game developer also needs to code the game accordingly to take proper advantage of any SLI or CFX setup. DX12’s explicit multi-GPU feature has not been totally abandoned, but not many game developers are using it either.

A second card will only help if games actually take advantage of multi-GPU, which requires game developers to implement it through the DX12 API.

Using a SINGLE powerful GPU is usually a better and more practical option for any high-end gaming setup than running two cards in tandem. A multi-GPU setup is generally not recommended, but this article focuses only on the performance gains that can be expected in games that support DX12 explicit multi-GPU.

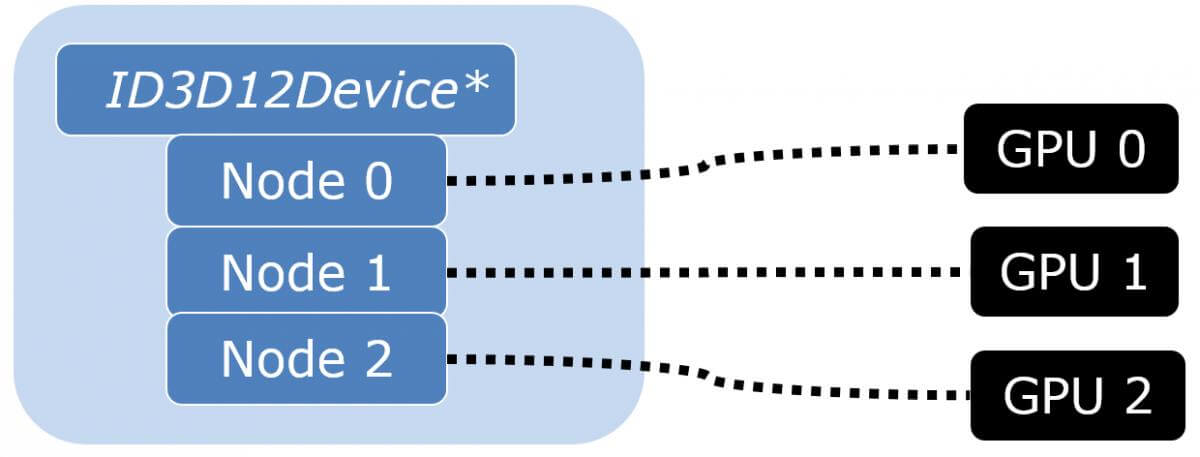

In theory, explicit multi-GPU programming became possible with the introduction of the DirectX 12 API. In previous versions of DirectX, the driver had to manage multiple GPUs in SLI. Now, DirectX 12 gives that control to the application.

Since the launch of SLI, a long time ago, utilization of multiple GPUs was handled automatically by the display driver. The application always saw one graphics device object no matter how many physical GPUs were behind it. With DirectX 12, this is not the case anymore.

As rendering engines have grown more sophisticated, the distribution of rendering workload automatically to multiple GPUs has become even more problematic.

Namely, temporal techniques that create data dependencies between consecutive frames make it challenging to execute alternate frame rendering (AFR), which still is the method of choice for distribution of work over multiple GPUs.

Practically speaking, the display driver needs hints from the application to understand which resources it must copy from one GPU to another, and which it should not. Data transfer bandwidth between GPUs is very limited, and copying too much stuff can make the transfers the bottleneck in the rendering process.
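As a rough back-of-the-envelope illustration of why inter-GPU copies can become the bottleneck, the Python sketch below estimates how long it takes to move one uncompressed 4K render target across a link and compares that with the time available per frame. The 16 GB/s link speed (roughly the theoretical ceiling of a PCIe 3.0 x16 slot) and the 4 bytes per pixel are assumptions picked for illustration, not measurements from this test, and the function names are hypothetical.

```python
def copy_time_ms(width, height, bytes_per_pixel, link_gb_per_s):
    """Time to copy one full-resolution surface across the link, in milliseconds."""
    size_bytes = width * height * bytes_per_pixel
    return size_bytes / (link_gb_per_s * 1e9) * 1000.0


def frame_budget_ms(fps):
    """Time available to produce one frame at the given frame rate, in milliseconds."""
    return 1000.0 / fps


# Assumed values for illustration only: a 4K RGBA8 surface over a 16 GB/s link.
ASSUMED_LINK_GB_PER_S = 16.0
copy_ms = copy_time_ms(3840, 2160, 4, ASSUMED_LINK_GB_PER_S)

for fps in (60, 120, 240):
    budget = frame_budget_ms(fps)
    share = copy_ms / budget * 100.0
    print(f"{fps} FPS: budget {budget:.2f} ms, one 4K copy uses {share:.0f}% of it")
```

Under these assumptions a single full-frame copy already costs a couple of milliseconds, so copying several intermediate buffers per frame quickly eats into the frame budget at high frame rates.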

Even when you didn’t have explicit control over multiple GPUs, you had to understand what happened implicitly and give the driver hints for doing it efficiently in order to get the desired performance out of any multi-GPU setup.

Now with DirectX 12, you can take full and explicit control of what is happening, and you are no longer limited to AFR mode either.

DirectX 12 exposes two alternate ways of controlling multiple physical GPUs. They can be controlled as multiple independent adapters where each adapter represents one physical GPU. Alternatively, they can be configured as one “linked node adapter” where each node represents one physical GPU.

However, it’s important to note that an application cannot control how it sees multiple GPUs. It cannot link or unlink adapters. The selection is made by the end user through the display driver settings.

In practice, the linked node mode is meant for multiple equal, discrete GPUs, i.e. classic SLI setups. It offers a couple of benefits. Within a linked node adapter, resources can be copied directly from the memory of one discrete GPU to the memory of another.

The copy doesn’t have to pass through system memory. Additionally, when presenting frames from secondary GPUs in AFR, there’s a special API for supporting connections other than PCIe.

Most of today’s multi-GPU setups use alternate frame rendering (AFR).

In AFR, each GPU renders every other frame (one GPU takes the odd frames, the other takes the even frames).

In SFR (split frame rendering), each GPU renders part of every frame, for example the top or bottom half, or some other division of the frame.
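The difference between the two modes can be sketched in a few lines of Python. This is only a toy model of how work is divided, not how a driver or DirectX 12 actually schedules it; the function names `afr_assign` and `sfr_split` are hypothetical.

```python
def afr_assign(frame_index, gpu_count=2):
    """AFR: whole frames alternate between GPUs (even frames -> GPU 0, odd -> GPU 1)."""
    return frame_index % gpu_count


def sfr_split(frame_height, gpu_count=2):
    """SFR: every frame is cut into horizontal bands, one band per GPU.

    Returns a list of (gpu, top_row, bottom_row) tuples; bottom_row is exclusive.
    """
    band = frame_height // gpu_count
    bands = []
    for gpu in range(gpu_count):
        top = gpu * band
        # The last GPU picks up any leftover rows when the height does not divide evenly.
        bottom = frame_height if gpu == gpu_count - 1 else top + band
        bands.append((gpu, top, bottom))
    return bands


# AFR: which GPU renders each of the first six frames.
print([afr_assign(i) for i in range(6)])

# SFR: how a 2160-row (4K) frame is divided between two GPUs.
print(sfr_split(2160))
```

In the AFR case each GPU works on a complete frame by itself, which is why techniques that reuse data from the previous frame force copies between GPUs. In the SFR case both GPUs contribute to the same frame, so the split has to be balanced for the frame to finish on time.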

Anyway, coming back to the testing done by TweakTown, better scaling and decent performance gains can be observed in some of the games. The difference is more evident at 4K, which is more GPU-bound than 1440p and 1080p. In one game tested, at 1440p, performance with mGPU enabled even drops to about 95% of the single-GPU result.

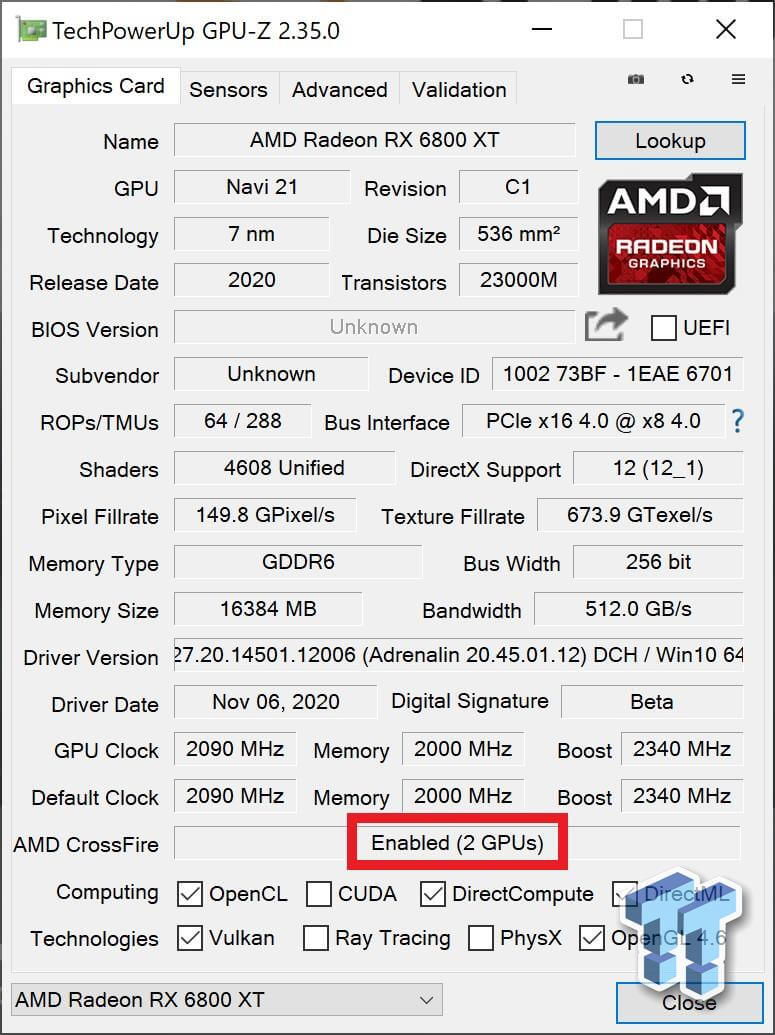

TweakTown tested two Radeon RX 6800 XT GPUs. One is a reference card, and the other is a custom AIB card from XFX, the XFX Speedster MERC 319. Four PC games were tested at 1080p, 1440p, and 4K: Sniper Elite 4, Strange Brigade, Rise of the Tomb Raider, and Deus Ex: Mankind Divided.

These games take advantage of AMD’s technology, so the support for multi-GPU is obviously there. The mGPU support must be enabled via the Radeon software GPU driver, and TechPowerUp’s GPU-Z tool will display if this feature has been properly enabled or not, as shown in this screenshot.

We will try to focus mostly on the 1440p and 4K results, but when it comes to 1080p fps numbers, Sniper Elite 4 scored almost 586 FPS in mGPU. This is 200 FPS more than the GeForce RTX 3090 (386 fps), and just under twice as much as what a single RX 6800 XT GPU offers (312 fps).

TweakTown also tested dual Radeon RX 5700 XTs which delivered 294 FPS, while a single RX 5700 XT scored 151 FPS, in Sniper Elite 4. What we’re seeing here is a near-perfect scaling with the AMD graphics cards.

Depending on the game settings we are looking at a 101% to 156% performance scaling at 1080p resolution when dual RX 6800 XT cards are used, in all the 4 games which were tested.

Coming to 1440p results, Sniper Elite 4 scored a huge 448 FPS in mGPU setup, whereas a single RX 6800 XT card could only offer 232 FPS. The RTX 3090 GPU on the other hand gives 284 FPS on average, while the RTX 3080 scored 260 FPS.

Strange Brigade again delivers an impressive 404 FPS in mGPU mode, while the RTX 3090 only achieves 257 FPS in comparison. Two Radeon RX 5700 XTs in mGPU mode, on the other hand, could only offer 220 FPS.

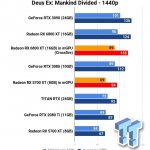

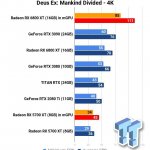

Deus Ex: Mankind Divided, on the other hand, had the worst scaling in mGPU. A single RX 6800 XT outperformed two of these cards, scoring 124 FPS on average, while the mGPU mode could only provide 118 FPS.

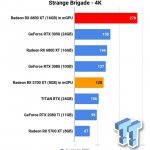

These are the 4K results. Strange Brigade had the highest scaling in the multi-GPU test, almost 193%. It scored 278 FPS on average in the mGPU setup, whereas the RTX 3090 scored 158 FPS, and the single RX 6800 XT could only offer 144 FPS on average.

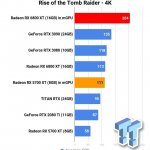

With Sniper Elite 4 we get around 255 FPS at 4K in the mGPU setup, while the NVIDIA GeForce RTX 3090 scored 171 FPS. A single RX 6800 XT, on the other hand, offers 145 FPS on average, almost the same as the RTX 3080.

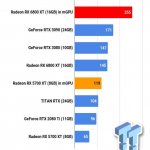

Rise of the Tomb Raider and Deus Ex: Mankind Divided also have great scaling at 4K, with ROTR hitting 204 FPS average at 4K in the m-GPU setup, whereas a single RX 6800 XT GPU only scored 113 FPS.

Deus Ex: Mankind Divided on the other hand scored 111 FPS in mGPU mode, while the single RX 6800 XT card could only provide 70 FPS.
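For anyone who wants to check the scaling figures, the short Python sketch below recomputes them from the average FPS numbers quoted in this article. Scaling here is taken to mean the dual-GPU frame rate as a percentage of the single-GPU frame rate, which matches the "almost 193%" quoted for Strange Brigade. The function name `scaling_percent` is hypothetical, and the resolution label on the 124/118 FPS Deus Ex result is inferred from where it appears in the article.

```python
def scaling_percent(single_fps, mgpu_fps):
    """Dual-GPU frame rate as a percentage of the single-GPU frame rate."""
    return mgpu_fps / single_fps * 100.0


# (label, single RX 6800 XT FPS, dual RX 6800 XT FPS), as quoted in this article.
results = [
    ("Sniper Elite 4, 1080p", 312, 586),
    ("Sniper Elite 4, 1440p", 232, 448),
    ("Deus Ex: Mankind Divided, 1440p", 124, 118),
    ("Strange Brigade, 4K", 144, 278),
    ("Sniper Elite 4, 4K", 145, 255),
    ("Rise of the Tomb Raider, 4K", 113, 204),
    ("Deus Ex: Mankind Divided, 4K", 70, 111),
]

for label, single, dual in results:
    print(f"{label}: {scaling_percent(single, dual):.1f}%")
```

Anything below 100% means the second card made things slower, and 200% would be perfect scaling with two GPUs.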

As you can see, a DX12 multi-GPU setup with AMD cards does offer better scaling in games at 4K, but as I mentioned before, going for a multi-GPU setup is not recommended, and it is not something the average gamer can afford either.

You would be better off buying a SINGLE powerful GPU rather than opting for an SLI or CFX setup, at least for gaming. Less hassle, less power consumption, less heat output, and better performance in games, depending on the game’s engine and GPU drivers, of course.

Source: TweakTown

Hello, my name is NICK Richardson. I’m an avid PC and tech fan since the good old days of RIVA TNT2, and 3DFX interactive “Voodoo” gaming cards. I love playing mostly First-person shooters, and I’m a die-hard fan of this FPS genre, since the good ‘old Doom and Wolfenstein days.

MUSIC has always been my passion/roots, but I started gaming “casually” when I was young on Nvidia’s GeForce3 series of cards. I’m by no means an avid or a hardcore gamer though, but I just love stuff related to the PC, Games, and technology in general. I’ve been involved with many indie Metal bands worldwide, and have helped them promote their albums in record labels. I’m a very broad-minded down to earth guy. MUSIC is my inner expression, and soul.
