Redfall is the latest Unreal Engine 4 game that, thankfully, supports NVIDIA’s DLSS 3 AI upscaling tech. As such, we’ve decided to benchmark both DLSS 2 and DLSS 3 at 4K/Epic Settings and share our impressions.

For our benchmarks, we used an AMD Ryzen 9 7950X3D, 32GB of DDR5 at 6000MHz, and NVIDIA’s RTX 4090. We also used Windows 10 64-bit and the GeForce 531.68 driver.

Redfall does not feature any built-in benchmark tool. So, for our tests, we used the game’s open-world area. This should give us a good idea of how the rest of the game works.
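
For readers who want to run a similar manual test, here is a minimal sketch of how minimum and average framerates could be derived from a frame-time log. The function name and the sample values are hypothetical. This is only an illustration, not a description of the capture tool behind the numbers in this article.

```python
def summarize_frame_times(frame_times_ms):
    """Return (min_fps, avg_fps) for per-frame render times given in milliseconds."""
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    # Average fps = frames rendered / total elapsed seconds
    # (this is not the same as averaging per-frame fps values).
    total_seconds = sum(frame_times_ms) / 1000.0
    avg_fps = len(frame_times_ms) / total_seconds
    # Minimum fps corresponds to the slowest (longest) single frame.
    min_fps = 1000.0 / max(frame_times_ms)
    return min_fps, avg_fps


# Hypothetical sample values, NOT measurements from Redfall.
sample = [8.0, 10.0, 8.0, 6.0]
min_fps, avg_fps = summarize_frame_times(sample)
print(f"min {min_fps:.0f}fps, avg {avg_fps:.0f}fps")
```

Note that some tools report 1% lows rather than the single slowest frame, so "minimum" figures may not be directly comparable between tools.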

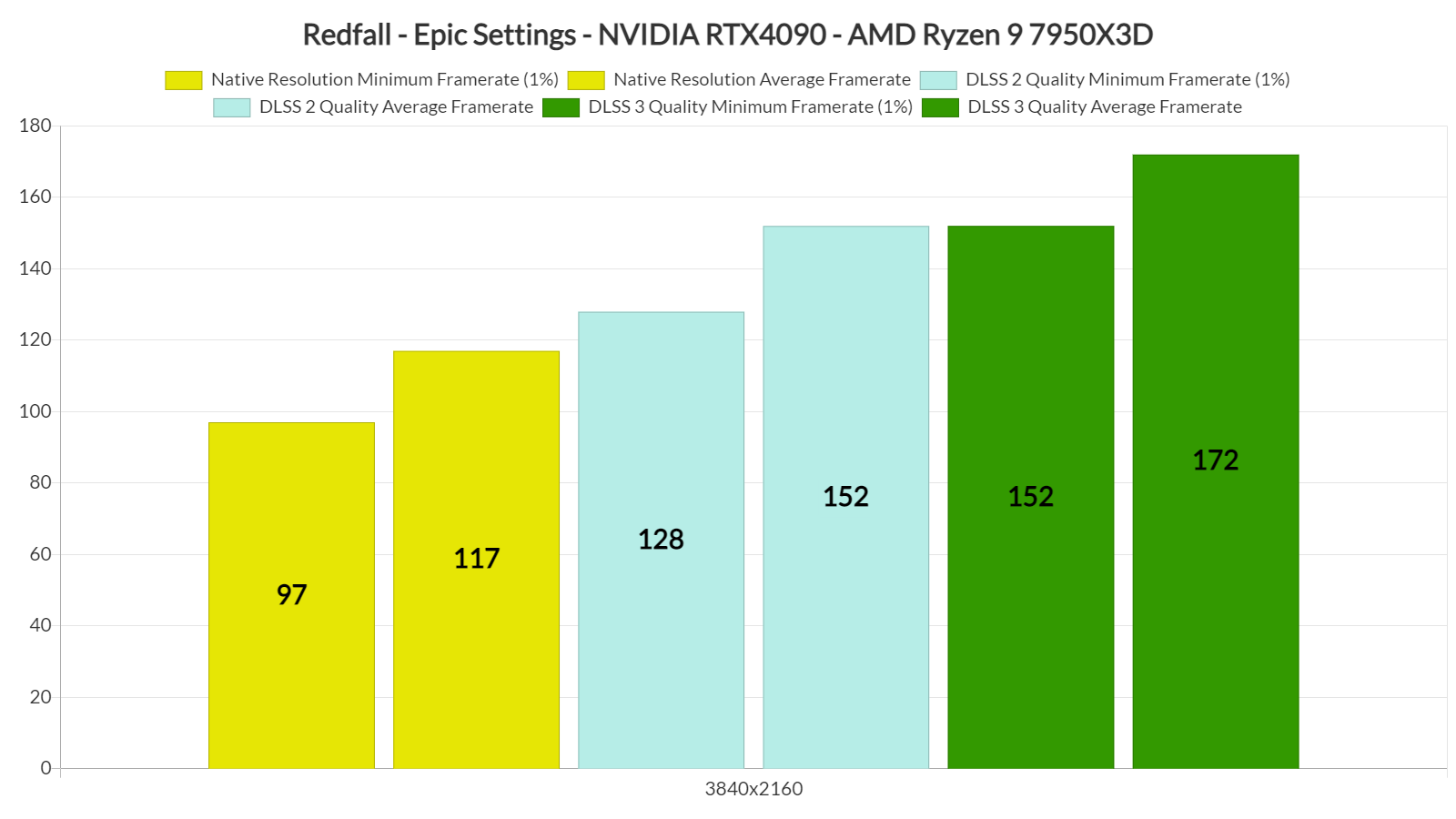

At Native 4K/Epic Settings, our NVIDIA RTX 4090 was able to push a minimum of 97fps and an average of 117fps. By enabling DLSS 2 Quality, we were able to increase our minimum and average framerates to 128fps and 152fps, respectively. Then, using DLSS 3’s Frame Generation, we were able to get an additional 13-19% performance boost.
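
To put the DLSS 2 Quality numbers into the same percentage terms as the Frame Generation boost, here is a quick sketch of the arithmetic. The framerates come from the paragraph above. The helper name `uplift_percent` is just an illustrative choice.

```python
def uplift_percent(baseline_fps, new_fps):
    """Percentage gain of new_fps over baseline_fps."""
    return (new_fps / baseline_fps - 1.0) * 100.0


# Framerates quoted in the article (4K/Epic Settings, RTX 4090).
native = {"min": 97, "avg": 117}
dlss2_quality = {"min": 128, "avg": 152}

for key in ("min", "avg"):
    gain = uplift_percent(native[key], dlss2_quality[key])
    print(f"DLSS 2 Quality vs native ({key}): +{gain:.0f}%")
```

This works out to roughly +32% on the minimum and +30% on the average framerate, so DLSS 2 Quality on its own gives a bigger uplift here than the additional 13-19% from Frame Generation.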

To be honest, I’m slightly disappointed by the performance boost of DLSS 3’s Frame Generation. Okay, okay, we’re looking at framerates that are constantly higher than 150fps. However, I was expecting more.

Now the good news is that DLSS 3’s Frame Generation does not bring any noticeable extra latency or visual artifacts. Thus, on a high-end CPU, this is simply free performance.

I should also note that Redfall uses different mouse sensitivity values when exploring the environments and when aiming down sights (ADS). Players may need some extra time to get used to it. However, this happens whether you enable DLSS 3 or not. The game does not have any mouse acceleration issues.

All in all, Redfall’s DLSS 3 implementation seems to be fine. DLSS 3 does not bring any additional stutters to the game, does not introduce extra latency, and players won’t be able to spot any visual artifacts. Owners of older CPUs may be able to double the game’s performance; owners of high-end CPUs, however, may only get a 20% performance boost. Still, everything appears to be working great here, so there is nothing to complain about!

John is the founder and Editor in Chief at DSOGaming. He is a PC gaming fan and highly supports the modding and indie communities. Before creating DSOGaming, John worked on numerous gaming websites. While he is a die-hard PC gamer, his gaming roots can be found on consoles. John loved – and still does – the 16-bit consoles, and considers SNES to be one of the best consoles. Still, the PC platform won him over consoles. That was mainly due to 3DFX and its iconic dedicated 3D accelerator graphics card, Voodoo 2. John has also written a higher degree thesis on “The Evolution of PC graphics cards.”
